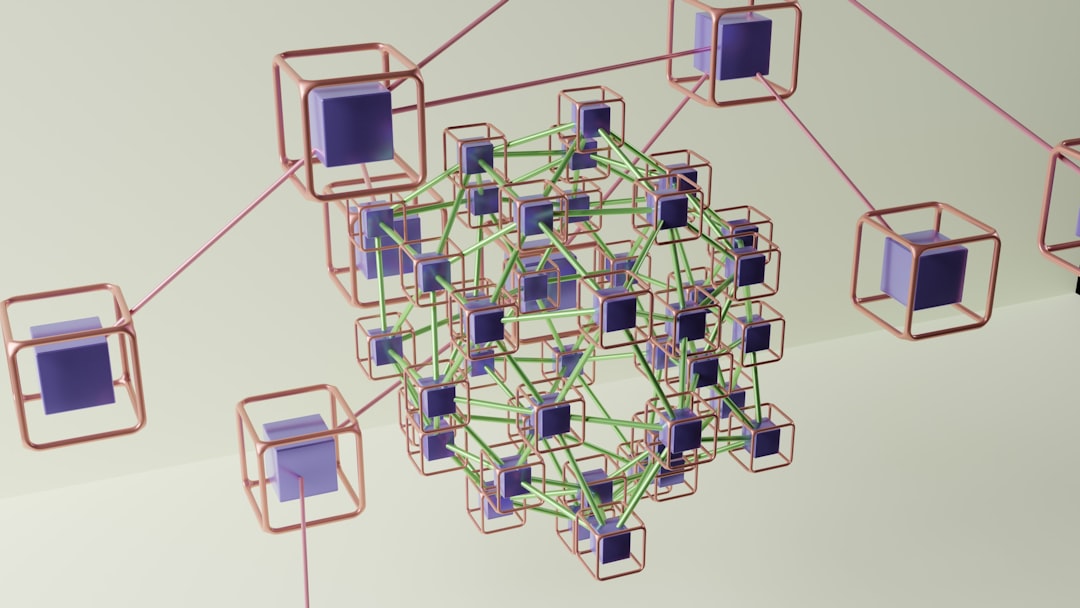

As concerns about data privacy, regulatory compliance, and cross-border data transfers continue to grow, organizations are rethinking how they train machine learning models. Traditional centralized training requires collecting raw data into a single repository, an approach that increases security risks and can conflict with privacy regulations. Federated learning offers an alternative by enabling distributed model training while keeping raw data local.

TLDR: Federated learning allows organizations to train AI models without sharing raw data by distributing training across devices or servers. This approach improves privacy, enhances security, and supports regulatory compliance. Several powerful tools now make federated learning practical for real-world deployments, ranging from enterprise-grade frameworks to research-oriented platforms. Below are seven leading federated learning tools and how they compare.

Instead of moving sensitive data into a central server, federated learning sends a model to the data, trains it locally, and aggregates only the model updates. The result is collaborative intelligence without centralized data collection — a critical advantage in industries like healthcare, finance, telecommunications, and mobile applications.
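The loop described above (send the model out, train locally, aggregate the updates) can be sketched in a few lines of plain Python. This is a minimal illustration of federated averaging (FedAvg), not the implementation used by any of the tools below. The linear model, the synthetic client datasets, and the names `local_train`, `federated_average`, and `make_client_data` are all assumptions made for the example.

```python
import random

def local_train(weights, data, lr=0.1, epochs=5):
    """One client's local training: gradient descent on y = w*x + b
    using only that client's own data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x
            grad_b += 2 * err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return (w, b)

def federated_average(updates):
    """Average client weights, weighting each client by its number of examples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return tuple(sum(wts[i] * n for wts, n in updates) / total for i in range(dim))

def make_client_data(rng, n, lo, hi):
    """Hypothetical private dataset: noisy samples of y = 3x + 1."""
    xs = [rng.uniform(lo, hi) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.05)) for x in xs]

rng = random.Random(0)
# Three clients with different amounts of data covering different input ranges.
clients = [
    make_client_data(rng, 20, 0.0, 1.0),
    make_client_data(rng, 50, 0.5, 1.5),
    make_client_data(rng, 30, -1.0, 0.0),
]

global_weights = (0.0, 0.0)
for round_num in range(50):
    # Each client starts from the current global model and trains locally.
    updates = [(local_train(global_weights, data), len(data)) for data in clients]
    # The server only ever sees (weights, example_count) pairs, never raw data.
    global_weights = federated_average(updates)

print("learned (w, b):", global_weights)
```

The server-side code never touches `clients` directly except to trigger local training, which mirrors the separation a real deployment enforces over the network. Production frameworks add client sampling, fault tolerance, and secure transport on top of this basic pattern.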

1. TensorFlow Federated (TFF)

TensorFlow Federated is one of the most widely adopted frameworks for federated learning research and prototyping. Built on top of TensorFlow, it is aimed primarily at simulating federated environments and experimenting with new federated algorithms.

Key features:

- Integration with TensorFlow ecosystem

- Simulation environment for large-scale experiments

- Support for custom federated algorithms

- Flexible research-oriented design

TFF is ideal for researchers and developers who already work within the TensorFlow stack. However, it may require additional engineering effort for production deployment.

2. PySyft

PySyft, developed by OpenMined, extends PyTorch to support privacy-preserving techniques such as federated learning, secure multi-party computation, and differential privacy.

Key features:

- Works seamlessly with PyTorch

- Support for encrypted computations

- Remote execution and secure model training

- Strong developer community focused on privacy

PySyft is particularly suited for teams that prioritize privacy research and cryptographic enhancements alongside federated training.

3. Flower (FLWR)

Flower is a flexible and framework-agnostic federated learning framework that supports PyTorch, TensorFlow, JAX, and more. Its simplicity and production-ready architecture make it popular among startups and enterprises alike.

Key features:

- Framework-agnostic design

- Scalable to millions of clients

- Easy deployment on cloud or edge devices

- Customizable federated strategies

Flower emphasizes usability and scalability, making it one of the most practical tools for real-world implementation.

4. NVIDIA FLARE

NVIDIA FLARE (Federated Learning Application Runtime Environment) is an enterprise-grade platform built for secure, scalable deployment of federated learning in regulated industries.

Key features:

- Enterprise security architecture

- Support for healthcare and financial applications

- Scalable orchestration tools

- Built-in privacy-enhancing techniques

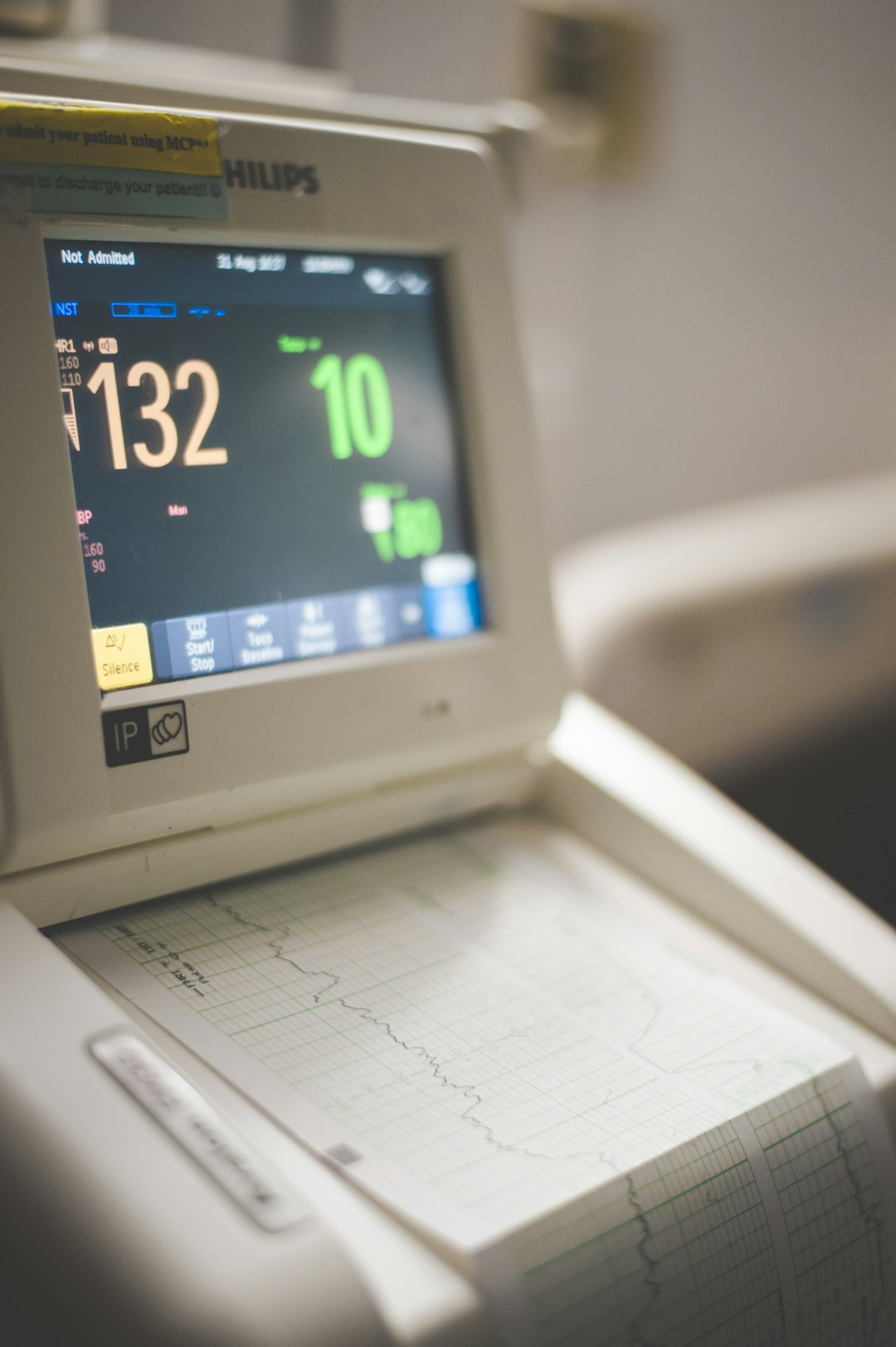

NVIDIA FLARE is particularly strong in medical AI applications, where hospitals can collaborate on model training without exposing patient data.

5. IBM Federated Learning

IBM offers a federated learning solution designed for enterprise AI environments. It integrates with IBM’s broader data governance and AI lifecycle management tools.

Key features:

- Enterprise-ready orchestration

- Regulatory compliance support

- Secure aggregation mechanisms

- Integration with enterprise AI ecosystems

This tool is well-suited for financial institutions and large enterprises seeking compliance-ready deployments.

6. FATE (Federated AI Technology Enabler)

FATE is an open-source project originally developed to support secure collaboration across organizations. It is widely used for cross-silo federated learning scenarios.

Key features:

- Industrial-scale architecture

- Secure multi-party computation support

- Heterogeneous federated learning

- Strong adoption in financial sectors

FATE works particularly well when multiple independent organizations need to collaborate while maintaining full data sovereignty.

7. OpenFL

Open Federated Learning (OpenFL) is an open-source framework focused on secure and scalable federated workflows. It is designed for cross-institution collaboration, especially in healthcare and research.

Key features:

- Secure task scheduling

- Extensible architecture

- Cross-silo collaboration support

- Edge and server-based federated training

OpenFL aims to standardize best practices in distributed model training without centralizing data, making it suitable for research consortia.

Comparison Chart: Top Federated Learning Tools

| Tool | Primary Use | Framework Support | Best For | Production Ready |
|---|---|---|---|---|
| TensorFlow Federated | Research & Simulation | TensorFlow | Academic & Research Teams | Moderate |
| PySyft | Privacy-Focused ML | PyTorch | Privacy Research | Moderate |
| Flower | Scalable Deployment | Multi-framework | Startups & Enterprises | High |
| NVIDIA FLARE | Enterprise AI | Multi-framework | Healthcare & Regulated Industries | Very High |
| IBM Federated Learning | Enterprise Governance | Enterprise AI Stack | Large Corporations | Very High |
| FATE | Cross-Organization ML | Multi-framework | Financial Institutions | High |
| OpenFL | Research Collaboration | Flexible | Research Consortia | High |

Why Federated Learning Matters

Federated learning is more than a privacy trend — it represents a structural shift in how AI systems are built. By eliminating centralized data collection, organizations reduce:

- Attack surfaces for cyber threats

- Regulatory risks related to GDPR, HIPAA, and similar laws

- Data transfer costs across regions

- Customer trust concerns about data misuse

Additionally, federated learning often enables collaboration between competitors or institutions that would otherwise be unable to share raw datasets. Hospitals can jointly train diagnostic models. Banks can improve fraud detection systems. Mobile device manufacturers can enhance personalization algorithms — all without revealing proprietary or sensitive data.

How to Choose the Right Tool

Selecting the correct federated learning platform depends on several factors:

- Technical stack: Does the team primarily use PyTorch, TensorFlow, or multiple frameworks?

- Deployment scale: Is the solution targeting millions of edge devices or a handful of institutions?

- Compliance requirements: Are strict regulatory standards involved?

- Security needs: Is additional encryption or secure multi-party computation required?

- Production maturity: Is the environment experimental or enterprise-grade?

Organizations in research settings may prioritize flexibility and experimentation, while enterprises often seek robust orchestration, auditing, and compliance features.

Frequently Asked Questions (FAQ)

1. What is federated learning in simple terms?

Federated learning is a distributed machine learning approach where models are trained locally on devices or servers using local data, and only model updates—not raw data—are shared and aggregated.

2. Is federated learning completely secure?

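No. Federated learning significantly improves privacy by keeping raw data local, but it is not immune to attack: model updates can still leak information about the underlying data, for example through gradient inversion or membership inference. Techniques such as differential privacy and secure aggregation can further strengthen its protections.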
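To make the idea of secure aggregation concrete, here is a toy sketch of pairwise masking: each pair of clients shares a random mask that one adds and the other subtracts, so the server sees only scrambled updates while the masks cancel in the sum. This is an illustration under simplifying assumptions, not a secure protocol. Updates are assumed to be already quantized to integers, the masks come from a single seeded generator rather than from key agreement between clients, and client dropouts are ignored. The function names are hypothetical.

```python
import random

MODULUS = 2**32  # all arithmetic is done modulo this value

def pairwise_masks(num_clients, dim, seed=0):
    """Build one mask vector per client so that all masks sum to zero (mod MODULUS).

    For each pair (i, j) with i < j, a shared random vector is added to
    client i's mask and subtracted from client j's mask.
    Assumption: a real protocol derives each shared vector from a key
    agreed between the two clients, not from a central seed.
    """
    rng = random.Random(seed)
    masks = [[0] * dim for _ in range(num_clients)]
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            shared = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masks[i][k] = (masks[i][k] + shared[k]) % MODULUS
                masks[j][k] = (masks[j][k] - shared[k]) % MODULUS
    return masks

def mask_update(update, mask):
    """Client side: hide the true update behind the client's mask."""
    return [(u + m) % MODULUS for u, m in zip(update, mask)]

def aggregate(masked_updates):
    """Server side: sum the masked updates; the pairwise masks cancel out."""
    dim = len(masked_updates[0])
    return [sum(u[k] for u in masked_updates) % MODULUS for k in range(dim)]

# Hypothetical integer-quantized updates from three clients.
client_updates = [[5, 12, 7], [3, 8, 1], [10, 0, 4]]

masks = pairwise_masks(len(client_updates), dim=3)
masked = [mask_update(u, m) for u, m in zip(client_updates, masks)]
total = aggregate(masked)

print("what the server receives from client 0:", masked[0])
print("aggregate recovered by the server:", total)
```

The server learns the element-wise sum of the updates but not any individual contribution. Differential privacy is a complementary technique: it typically clips each update and adds calibrated noise so that the aggregate itself reveals less about any single participant.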

3. What industries benefit most from federated learning?

Healthcare, finance, telecommunications, government, and mobile technology sectors benefit the most because they handle highly sensitive or regulated data.

4. Can federated learning work on mobile devices?

Yes. Federated learning was initially popularized for mobile environments, where devices train models locally and share updates with a central server.

5. How does federated learning differ from distributed learning?

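Distributed learning typically starts from a dataset that one organization already controls and partitions it across nodes to speed up processing. Federated learning instead assumes the data originates on separate devices or organizations, stays there, and only model parameters or updates are shared.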

6. Is federated learning suitable for small businesses?

Yes. With flexible open-source frameworks such as Flower, smaller organizations can implement federated models without massive infrastructure investments.

7. Does federated learning reduce compliance burden?

It can significantly reduce compliance risks since raw data never leaves its source, but organizations must still implement proper governance and security practices.

As privacy expectations and AI adoption continue to evolve, federated learning tools are becoming essential components of responsible machine learning infrastructure. With the right framework, organizations can unlock collaborative intelligence while keeping sensitive data exactly where it belongs — secure and local.